Looking at LLMs through a Finance Lens (3): How much risk can we really manage for things that haven’t happened yet?

ภาษาอื่น / Other language: English · ไทย

I just got back to writing this after last month. In the first two posts, we talked about how finance created tools like VaR and Expected Shortfall not because the formulas are elegant, but because humans fear “bad days” more than averages. We also started to see that LLMs differ from financial systems in one key way: they exist in a world with adversaries—people constantly trying to push systems from VaR-type failures into ES-type failures.

This post continues from there by asking a bigger question:

What are the unavoidable limitations of all risk measurement tools—including the best ones finance has ever built?

When the ruler no longer matches reality

In 2007, even before the crisis reached its peak, David Viniar (CFO of Goldman Sachs) gave an interview to the Financial Times explaining what was happening to the firm’s funds.

He said:

“We are seeing 25-standard deviation moves, several days in a row.”

This kind of explanation is familiar language in risk management.

It sounds scientific—but if you think about it, it implies something much more unsettling.

Imagine sigma as the “normal scale” of market movement:

- 1-sigma → normal daily fluctuation

- 3-sigma → unusual

- 5-sigma → once-in-a-lifetime

So what is 25-sigma?

Under a Gaussian assumption, it corresponds to a probability of roughly 3 × 10⁻¹³⁸ per day, or an expected waiting time of around 10¹³⁵ years—far longer than the age of the universe.

But Viniar didn’t say it happened once. He said:

“Several days in a row.”

At first, it sounds like extreme bad luck.

But what it really implies is this:

the ruler used to measure normal days was never designed for days like that.

A 25-sigma result doesn’t mean reality became absurd—it means the model and reality stopped matching.

The real question

So the right question is not:

“Where did we miscalculate?”

But:

“Is the ruler we’re using appropriate for the real world?”

All the tools we’ve discussed—VaR, Expected Shortfall, stress testing, simulation—share one deep commonality:

They are all calibrated using the past.

Even forward-looking tools like stress tests still depend on historical volatility, correlations, and expert judgment—all anchored in what has already happened, under the assumption that the future will resemble patterns we can imagine.

This is not a flaw in the designers.

Not a mistake in the formulas.

It is a structural limitation.

The problem is that the most dangerous risks often do not follow the past.

Further reading: Dowd, K., Cotter, J., Humphrey, C., & Woods, M. (2008). How unlucky is 25-sigma? Journal of Portfolio Management, 34(4), 76–80.

After 2008: accepting model risk

After the 2008 crisis, the key lesson wasn’t just to find better formulas.

It was to accept that no model escapes model risk.

And more importantly:

We must never forget that the ruler we rely on may completely fail when the world shifts beyond its assumptions.

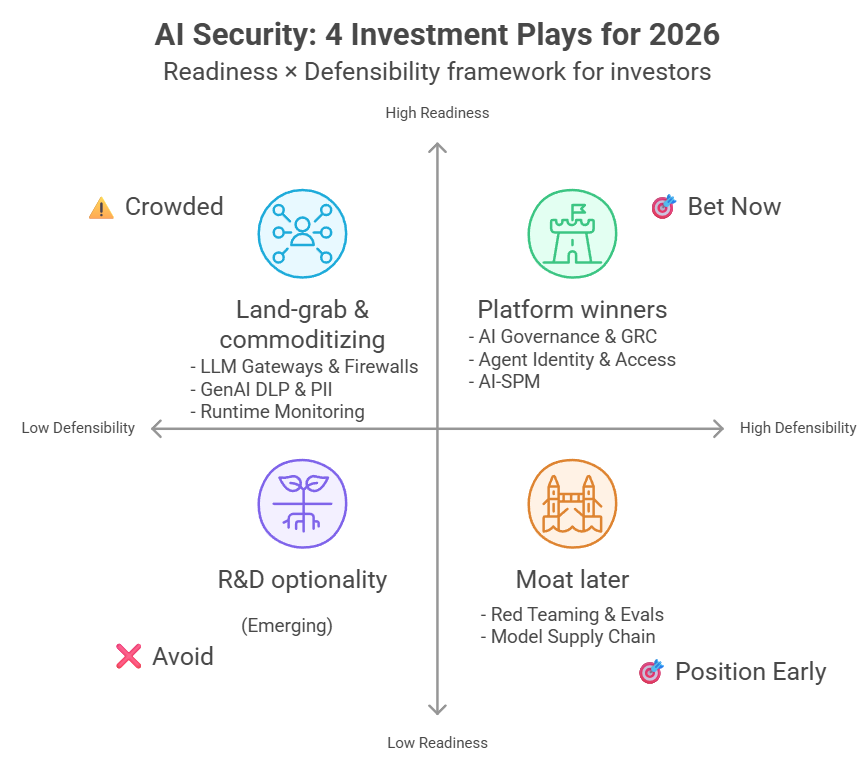

Bringing this into the LLM world

This connects directly to LLM systems.

We are using rulers designed for “normal days” to measure systems with three properties at once:

- Stochasticity — outputs are not identical each time

- Privilege — the system can access and act on important data and tools

- Adversary — unlike finance, there are actors actively trying to push the system into failure

Financial markets do have adversarial behaviors (price manipulation, spoofing, front-running), but the difference is targeting.

In LLMs, attacks are designed specifically for the system’s behavior and attack surface—not just exploiting market inefficiencies, but actively searching for failure modes embedded in the system itself.

From fat tails to adversarial tails

Statistically, this resembles fat tails—extreme events happening more often than expected.

But LLMs go beyond that:

- The distribution is non-stationary (it keeps changing)

- There are active agents pulling the tail outward

Even worse, some risks are not just rare events. They are:

Discovered, repeatable vulnerabilities waiting to be reused.

Why benchmarks are not enough

Testing LLMs with standard benchmarks is like backtesting a portfolio during calm market periods.

It tells you how the system behaves under normal conditions—but not how it breaks under pressure.

That’s why adversarial evaluations are emerging: to simulate attackers who adapt and search for weaknesses.

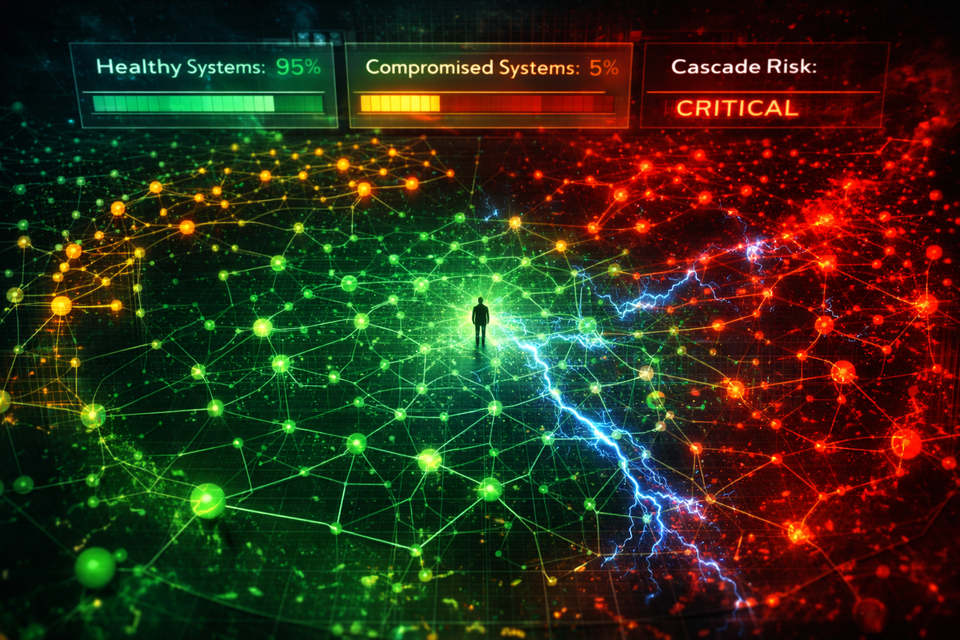

When scale makes failure inevitable

As systems grow large enough, the failure of our “ruler” becomes unavoidable.

In probability, rare events become inevitable with enough time and scale.

When LLMs are deployed:

- to millions of users

- over long periods

- with real system integrations

Three things happen simultaneously:

1. Time

Rare edge cases eventually occur.

A system tested for hundreds of hours can still fail in month four—because testing has scope limits, but reality doesn’t.

2. People

A more diverse population—including adversarial users—interacts with the system in unexpected ways.

Not just direct jailbreaks, but:

- context manipulation

- language tricks

- role-play

- malicious documents

There’s also an incentive mismatch:

- Testing teams stop when scope is complete

- Real users keep exploring (they’ve paid for it)

Ironically, paying users may explore systems more deeply than bounded test teams.

Even bug bounty programs don’t fully solve this:

- high-skill individuals often don’t participate due to friction

- yet they still use the system—and may discover vulnerabilities anyway

3. Propagation

This is no longer probability—it becomes contagion.

Once a vulnerability is discovered:

- it spreads via Discord, Reddit, templates

- it evolves and mutates

Unlike random failures, exploits have:

- memory

- learning

- replication

Behavioral vulnerabilities in LLMs rarely disappear completely. Even if one pattern is patched, the underlying principle often survives and reappears in new forms.

What once required expertise becomes copy-paste.

The question changes

The question is no longer:

“Will something bad happen?”

But:

“When will it happen?”

The lesson from finance

Even with sophisticated tools, if the underlying assumptions are wrong, the numbers only create a false sense of safety.

The real risk remains in the blind spots.

In LLM systems, the issue goes further:

We don’t just use the ruler—we turn it into a target.

Benchmarks, defense rates, evaluation scores become optimization goals rather than tools for understanding risk.

And when that happens, something deeper breaks:

- developers optimize for metrics

- evaluators optimize for metrics

- attackers optimize against metrics

A measurement designed to observe risk becomes a mechanism that distorts risk.

This is Goodhart’s Law.

And that’s where the next post begins.

English translation by ChatGPT (GPT-5.2), from the original Thai text.